CSE 673: Computational Vision - Spring 2013

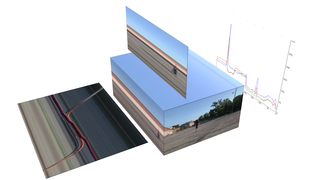

During this course we explore some of the underlying principles and algorithms that enable computers to see and reason spatially. These capabilities allow mobile robots to perceive the world around them in order to accomplish useful tasks such as navigating, manipulating objects and interacting with humans. One good definition of robotics is the intelligent connection of perception to action. This definition makes clear how vital perception is: because it comes first in the processing chain, the later stages are difficult to improve without functional perception.

We will work from research papers and reports rather than a required textbook.

- Times
  - MoWeFr 13:00-13:50
- Location
  - Davis 338A
- Course Website
  - http://www.cse.buffalo.edu/~jryde/cse673
- Forum
  - https://piazza.com/class#spring2013/cse673
- Instructor
  - Julian Ryde (UBIT: jryde), Office 333, Davis Hall, 716 645 1590, jryde at buffalo dot edu
- Office hours
  - TuTh 12:00-14:00 (Davis 333)
- Exam Schedule
  - Midterm, Wednesday 6 March 2013, 13:00-13:50 in Davis 338A
  - Final, Wednesday 8 May 2013, 11:45-14:45 in Norton 210

Lecture dates

| Wk | Start | Mon | Wed | Fri |
|---|---|---|---|---|
| 1 | Jan 14 | Introduction, 01 | Python for CV, PythonCV, PythonCV2 | |
| 2 | Jan 21 | No Class | Projective geometry, 02 | Stereopsis, 02 |
| 3 | Jan 28 | Correspondence, 03 | Shape from X, 03 | |
| 4 | Feb 04 | Passive 3-D Object recognition, 04 | | |
| 5 | Feb 11 | Pointclouds (J. Delmerico), PCL | | |
| 6 | Feb 18 | Optical flow, 05 | Structure from motion, 05 | |
| 7 | Feb 25 | FeatureDetection | ExamPrep | ExamPrep, Natural Vision, 06 |
| 8 | Mar 04 | Natural Vision, 06 | Midterm Exam | No Class |
| 9 | Mar 11 | Spring Break No Classes | | |
| 10 | Mar 18 | Purposive Vision, 07 | Egomotion estimation, 08 | Collision Avoidance, 08 |
| 11 | Mar 25 | Visual Servoing, 08 | Visual Homing, 08 | Vision for recognition, 09 |
| 12 | Apr 01 | Active Object Recognition, 09 | Active Object Recognition, 09 | Attentional control, 10 |
| 13 | Apr 08 | Mobile robot mapping, 11 | Student presentations | |
| 14 | Apr 15 | Student presentations | | |
| 15 | Apr 22 | Student presentations | | |
| 16 | Apr 29 | Final Exam preparation | Reading Days | |

See Projects for the project presentation schedule and the guidelines for the Presentations.

Course outline

The outline may change depending on the class's background knowledge, interests and rate of progress.

- Introduction
  - Introduction and motivation
  - Python for computer vision
- 3-D Imaging Models
  - Projective geometry
  - Stereopsis I
  - Stereopsis II
- Passive Shape Recovery
  - Shape from shading I
  - Shape from shading II
  - Shape from X
  - Correspondence
- Vision for Navigation
  - Egomotion estimation
  - Collision Avoidance
  - Visual Servoing
- Passive 3-D Object Recognition
  - 3-D shape models I
  - 3-D shape models II
- Range Sensors and Sensing
  - RGB-D - Kinect/Asus Xtion
  - Laser scanners
- Perception for Mapping (SLAM)
  - Random-Sample Consensus, RANSAC
  - Iterative Closest Point, ICP
- Point clouds and the pointcloud library, PCL
  - Estimating surface normals from a pointcloud, PCL tutorial
- Data structures for spatial representation
  - Hashtables/hashing etc.
  - Octrees
  - Bloom Filter
- Passive Motion Analysis
  - Optical Flow
  - Structure from Motion

Assessment Mechanism

The table below summarizes the contribution of each assessment element to the overall grade. The instructor reserves the right to make minor adjustments as necessary.

| Element | Proportion | Duration (min) | Date |
|---|---|---|---|
| Midterm Exam | 35% | 50 | 6 March |
| Final Exam | 35% | 180 | 8 May |
| Project intro., related work | 5% | | 17 Feb |
| Project final report | 15% | | 1 May |
| Presentations | 10% | 15 + 5 | See lecture dates |
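As a rough illustration of how the proportions in the table combine, the sketch below computes an overall percentage as a weighted sum. The names `WEIGHTS` and `overall_grade` and the example scores are hypothetical, and flooring the result at zero is an assumption. The per-day deduction is the late penalty stated in the Project section for the project introduction. Any adjustments made by the instructor are not modelled.

```python
# Weights in percent, copied from the assessment table.
WEIGHTS = {
    "midterm": 35,
    "final": 35,
    "project_intro": 5,
    "project_report": 15,
    "presentation": 10,
}
assert sum(WEIGHTS.values()) == 100


def overall_grade(scores, days_late=0):
    """Return the overall course percentage (hypothetical helper).

    scores maps every key of WEIGHTS to a percentage in [0, 100].
    days_late is the number of days the project introduction was late;
    each day removes 1% of the entire course grade. Flooring at zero
    is an assumption, not a stated course rule.
    """
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing scores: {sorted(missing)}")
    weighted = sum(WEIGHTS[name] * scores[name] for name in WEIGHTS) / 100
    return max(0.0, weighted - days_late)


# Made-up scores, for illustration only.
example = {
    "midterm": 80,
    "final": 90,
    "project_intro": 100,
    "project_report": 70,
    "presentation": 60,
}
print(overall_grade(example))               # 81.0
print(overall_grade(example, days_late=2))  # 79.0
```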

The midterm and final exams are closed book and closed notes, and will require handwritten answers. The midterm will cover the first half of the material and the final will cover all of the material. Each student will give a conference-style presentation (15 minutes with 5 minutes for questions) of a conference paper related to the course material during the last weeks of class.

Example exam questions that are not in the notes will be covered during the lectures. Attendance at the student presentations is required and will be taken.

Project

The project report should be similar to a conference paper as submitted to IROS, ICRA, CVPR etc. The format should be eight pages, double column, and should adhere to the IEEE conference paper template. For an example outline of a scientific paper see PaperStructure.

The introduction, related work and background material sections should be submitted by email as a PDF attachment by 23:59 on 17 Feb. Late submissions lose 1% of the entire course grade per day. Please make sure the PDF is smaller than 10 MB; reduce the resolution or quality of images as necessary. Run pdffonts on the PDF file to check that all fonts are embedded and subsetted, as per the IEEE submission FAQs.
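The font check can be scripted. The following is a minimal sketch, assuming the usual pdffonts text layout: a header line, a dashed rule, then one row per font ending in the `emb`, `sub`, `uni` and object ID columns. The helper names `fonts_not_embedded` and `check_pdf` and the sample table are hypothetical; verify the layout against the pdffonts version installed on your machine.

```python
import shutil
import subprocess


def fonts_not_embedded(pdffonts_output):
    """Return names of fonts that are not both embedded and subsetted.

    Columns are read from the right because the type column can contain
    spaces (for example "Type 1"). Assumes each row ends with
    emb, sub, uni, object number, generation number.
    """
    problems = []
    for line in pdffonts_output.splitlines()[2:]:  # skip header and rule
        fields = line.split()
        if len(fields) < 7:
            continue  # blank or unexpected line
        emb, sub = fields[-5], fields[-4]
        if emb != "yes" or sub != "yes":
            problems.append(fields[0])
    return problems


def check_pdf(path):
    """Run pdffonts on path; requires the pdffonts executable on PATH."""
    if shutil.which("pdffonts") is None:
        raise RuntimeError("pdffonts not found on PATH")
    result = subprocess.run(
        ["pdffonts", path], capture_output=True, text=True, check=True
    )
    return fonts_not_embedded(result.stdout)


# Made-up sample output, for illustration only.
SAMPLE = """\
name                                 type              encoding         emb sub uni object ID
------------------------------------ ----------------- ---------------- --- --- --- ---------
ABCDEF+NimbusRomNo9L-Regu            Type 1            Custom           yes yes no       8  0
Helvetica                            Type 1            Custom           no  no  no      12  0
"""
print(fonts_not_embedded(SAMPLE))  # ['Helvetica']
```

An empty list from `check_pdf("report.pdf")` (the file name is a placeholder) would suggest that every listed font is embedded and subsetted.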

The value of our courses, grades, degrees and research findings depends upon adherence to standards of ethical conduct. Projects will be checked for originality by submission to a web service that searches for duplication between report sections and material available on the web or previously submitted to the service for originality analysis.

Relevant Software Libraries and Tools

- OpenCV - Large C++/Python/C computer vision library

- PCL - Point cloud processing library

- Ndimage - Python N-dimensional array library

- Ndvision - video processing based on N-dimensional arrays

- MROL - Multi-resolution occupied voxel lists for efficient environment representation and mapping

Related Reading

- An Invitation to 3-D Vision: From Images to Geometric Models - Yi Ma, Stefano Soatto, Jana Kosecka, S. Shankar Sastry

- Image Processing, Analysis, and Machine Vision - Milan Sonka, Vaclav Hlavac, Roger Boyle

- OpenCV
  - Learning OpenCV - Gary Bradski and Adrian Kaehler
  - OpenCV tutorials

- Making things see: 3D vision with Kinect, Processing, Arduino and MakerBot - Greg Borenstein, UB library copy

Related courses

Much of the lecture material is based on Peter Scott's previous course CSE668, which he most generously donated.

- ETH Zurich - Autonomous Mobile Robotics - R. Siegwart, M. Chli, M. Rufli and D. Scaramuzza

- Cambridge - Computer Vision and Robotics - R. Cipolla

Policies

See the following UB policy links.

- Disability
  - http://www.student-affairs.buffalo.edu/ods/
- Counseling
  - http://www.student-affairs.buffalo.edu/shs/ccenter/
- Academic Integrity
  - http://www.cse.buffalo.edu/graduate/policies_acad_integrity.php
  - http://www.ub-judiciary.buffalo.edu/art3a.shtml#integrity
  - http://undergrad-catalog.buffalo.edu/policies/course/integrity.shtml